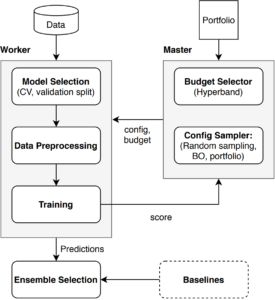

While early AutoML frameworks focused on optimizing traditional ML pipelines and their hyperparameters, a more recent trend in AutoML focuses on neural architecture search. To bring the best of these two worlds together, we developed Auto-PyTorch, which jointly and robustly optimizes the network architecture and the training hyperparameters to enable fully automated deep learning (AutoDL). Auto-PyTorch achieved state-of-the-art performance on several tabular benchmarks by combining three ingredients: multi-fidelity optimization, portfolio construction for warmstarting, and ensembling of deep neural networks (DNNs) with common baselines for tabular data.
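To make the ensembling idea more concrete, here is a minimal sketch of greedy ensemble selection with replacement, a common generic technique for combining models from validation predictions. The text above does not say which ensembling method Auto-PyTorch uses, so treat this as an illustrative assumption; `greedy_ensemble` and the toy predictions are hypothetical and not part of the Auto-PyTorch API.

```python
import numpy as np


def greedy_ensemble(val_probs, y_val, size=5):
    """Greedy forward selection (with replacement) over model predictions.

    val_probs: list of arrays of shape (n_samples, n_classes), one per model.
    Returns the indices of the selected models; repeats act as weights.
    """
    chosen = []
    running_sum = np.zeros_like(val_probs[0])
    for _ in range(size):
        best_idx, best_acc = None, -1.0
        for i, probs in enumerate(val_probs):
            # Validation accuracy of the ensemble if model i were added next.
            avg = (running_sum + probs) / (len(chosen) + 1)
            acc = np.mean(avg.argmax(axis=1) == y_val)
            if acc > best_acc:
                best_idx, best_acc = i, acc
        chosen.append(best_idx)
        running_sum += val_probs[best_idx]
    return chosen


# Toy validation predictions from three hypothetical models (e.g. two DNNs
# and one baseline) on a five-sample binary problem.
y_val = np.array([0, 1, 1, 0, 1])
val_probs = [
    np.array([[0.9, 0.1], [0.4, 0.6], [0.6, 0.4], [0.7, 0.3], [0.2, 0.8]]),
    np.array([[0.6, 0.4], [0.7, 0.3], [0.1, 0.9], [0.8, 0.2], [0.3, 0.7]]),
    np.array([[0.4, 0.6], [0.3, 0.7], [0.2, 0.8], [0.4, 0.6], [0.6, 0.4]]),
]
members = greedy_ensemble(val_probs, y_val, size=3)
print("Selected model indices:", members)
```

Selecting with replacement lets a strong model appear several times, which acts as a simple integer weighting when the selected predictions are averaged.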

The API is inspired by auto-sklearn and only requires a few inputs to fit a DL pipeline on a given dataset:

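    >>> from autoPyTorch import AutoNetClassification
    >>> import sklearn.metrics
    >>> # "tiny_cs" selects a config preset; X_train, y_train, X_test, y_test are your data splits
    >>> autoPyTorch = AutoNetClassification("tiny_cs", max_runtime=300, min_budget=30, max_budget=90)
    >>> autoPyTorch.fit(X_train, y_train, validation_split=0.3)
    >>> y_pred = autoPyTorch.predict(X_test)
    >>> print("Accuracy score", sklearn.metrics.accuracy_score(y_test, y_pred))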
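The `min_budget` and `max_budget` arguments reflect the multi-fidelity idea: many configurations are evaluated cheaply, and only the promising ones receive a larger budget. The following is a minimal sketch of successive halving, one generic multi-fidelity scheme, and not Auto-PyTorch's actual optimizer. The names `successive_halving` and `toy_loss`, the reduction factor `eta`, and the toy search space are all assumptions made for illustration.

```python
import random


def successive_halving(configs, evaluate, min_budget, max_budget, eta=3):
    """Generic successive halving; lower loss is better."""
    survivors = list(configs)
    budget = min_budget
    while True:
        # Rank every surviving configuration at the current budget.
        scored = sorted(survivors, key=lambda c: evaluate(c, budget))
        if budget >= max_budget or len(scored) == 1:
            return scored[0]
        # Keep the best 1/eta of the configurations and raise the budget.
        keep = max(1, len(scored) // eta)
        survivors = scored[:keep]
        budget = min(budget * eta, max_budget)


def toy_loss(config, budget):
    # Hypothetical stand-in for training a network with a given budget:
    # the loss shrinks toward the configuration's own quality as budget grows.
    return (config["lr"] - 0.01) ** 2 + 1.0 / budget


rng = random.Random(0)
candidates = [{"lr": 10 ** rng.uniform(-4, -1)} for _ in range(9)]
best = successive_halving(candidates, toy_loss, min_budget=30, max_budget=90)
print("Best configuration:", best)
```

With nine candidates, a minimum budget of 30, a maximum of 90, and `eta=3`, all nine are scored at budget 30 and the best three are re-scored at budget 90. In practice `evaluate` would train and validate a network, so low-budget rankings are noisy rather than exact as in this toy loss.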

If you are interested in Auto-PyTorch, you can find our open-source implementation here:

References

- Lucas Zimmer, Marius Lindauer and Frank Hutter: Auto-PyTorch Tabular: Multi-Fidelity Meta Learning for Efficient and Robust AutoDL In: IEEE Transactions on Pattern Analysis and Machine Intelligence. 2021

- Hector Mendoza, Aaron Klein, Matthias Feurer, Jost Tobias Springenberg, Matthias Urban, Michael Burkart, Max Dippel, Marius Lindauer and Frank Hutter: Towards Automatically-Tuned Deep Neural Networks In: AutoML: Methods, Systems, Challenges. 2019