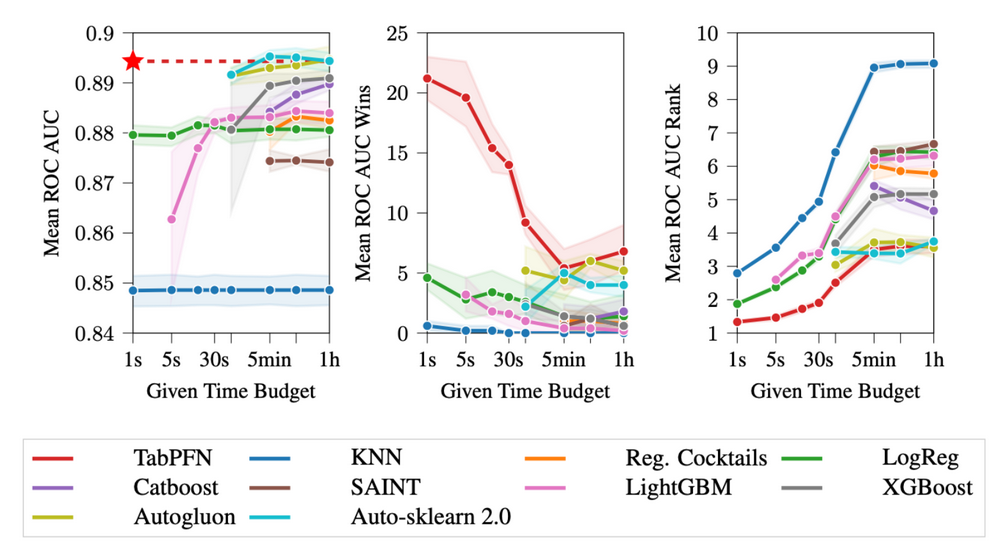

Imagine a world where you could get incredibly accurate predictions in the blink of an eye. It may sound like a dream, but we are actively working to make it a reality with our project TabPFN. TabPFN can already classify small tabular datasets with numerical features (up to 1,000 training examples, 100 features, and 10 classes) at a level competitive with state-of-the-art methods, in about half a second.

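How do we achieve this? We meta-train a Transformer variant, a Prior-Data Fitted Network (PFN), to perform classification on artificially generated datasets. This training procedure provably approximates the Bayesian posterior predictive distribution, which amounts to ensembling infinitely many models drawn from the prior, each weighted by how well it explains the training data.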
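To make the "ensembling infinitely many models" idea concrete, here is a minimal sketch under a deliberately simple, assumed prior: each "model" is a logistic classifier with Gaussian weights. This toy prior is our assumption for illustration and is far simpler than the prior TabPFN is actually trained on. `sample_dataset` shows how artificial training tasks could be generated from such a prior, and `posterior_predictive` estimates by brute force, via likelihood-weighted Monte Carlo averaging over sampled models, the quantity a PFN is trained to approximate. All function names are hypothetical; this is not the TabPFN implementation.

```python
import math
import random


def log_sigmoid(z):
    """Numerically stable log(1 / (1 + exp(-z)))."""
    if z >= 0:
        return -math.log1p(math.exp(-z))
    return z - math.log1p(math.exp(z))


def sample_model(num_features, rng):
    """Draw one toy model from the assumed prior: a logistic classifier with N(0, 1) weights and bias."""
    weights = [rng.gauss(0.0, 1.0) for _ in range(num_features)]
    bias = rng.gauss(0.0, 1.0)
    return weights, bias


def logit(model, x):
    """Linear score of one row under a toy model."""
    weights, bias = model
    return bias + sum(w * xi for w, xi in zip(weights, x))


def sample_dataset(num_rows, num_features, rng):
    """Generate one artificial dataset: pick a model from the prior, then sample rows and labels from it."""
    model = sample_model(num_features, rng)
    X = [[rng.gauss(0.0, 1.0) for _ in range(num_features)] for _ in range(num_rows)]
    y = [1 if rng.random() < math.exp(log_sigmoid(logit(model, x))) else 0 for x in X]
    return X, y


def posterior_predictive(X_train, y_train, x_test, num_models=20000, seed=0):
    """Estimate P(y=1 | x_test, training data) by averaging prior models weighted by their likelihood."""
    rng = random.Random(seed)
    log_weights, predictions = [], []
    for _ in range(num_models):
        model = sample_model(len(x_test), rng)
        # Log-likelihood of the observed training labels under this model.
        log_lik = sum(
            log_sigmoid(logit(model, x)) if label == 1 else log_sigmoid(-logit(model, x))
            for x, label in zip(X_train, y_train)
        )
        log_weights.append(log_lik)
        predictions.append(math.exp(log_sigmoid(logit(model, x_test))))
    # Normalize the weights with the log-sum-exp trick to avoid underflow.
    max_log_w = max(log_weights)
    weights = [math.exp(lw - max_log_w) for lw in log_weights]
    return sum(w * p for w, p in zip(weights, predictions)) / sum(weights)


# Usage: a tiny 1-D training set where larger x means class 1.
p = posterior_predictive([[-2.0], [-1.0], [1.0], [2.0]], [0, 0, 1, 1], [3.0])
print(f"P(y=1 | x=3) is roughly {p:.2f}")
```

The brute-force loop needs thousands of likelihood evaluations per query. A PFN meta-trained on many datasets like those produced by `sample_dataset`, learning to predict held-out labels, instead learns to output an approximation of this average in a single forward pass, which is what makes sub-second predictions possible.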

We are working hard to overcome the current limitations and to generalize the approach to settings beyond traditional supervised learning.